Combining data from separate but concurrent runs

Here we will look at XGM (X-ray Gas Monitor) data that was recorded in the same short time interval, but in different parts of EuXFEL. We will compare an XGM in SASE1 (XTD2) to another one in SASE3 (XTD10). These data were stored in two different runs, belonging to two different proposals.

Conceptually, this section makes use of the data-object format xarray.DataArray.

[1]:

%matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

import xarray as xr

from extra_data import open_run

SASE1

Load the SASE1 run:

[2]:

sa1_data = open_run(proposal=700000, run=8)

sa1_data.info()

# of trains: 6296

Duration: 0:10:29.6

First train ID: 38227866

Last train ID: 38234161

0 detector modules ()

1 instrument sources (excluding detectors):

- SA1_XTD2_XGM/XGM/DOOCS:output

0 control sources:

We are interested in fast, i.e. pulse-resolved data from the instrument source SA1_XTD2_XGM/DOOCS:output.

[3]:

sa1_data['SA1_XTD2_XGM/XGM/DOOCS:output'].keys()

[3]:

{'data.intensityTD'}

We are particularly interested in data for quantity “intensityTD”. The xarray DataArray class is suited for work with axis-labeled data, and the extra_data method get_array() serves the purpose of shaping a 2D array of that type from pulse-resolved data (which is originally stored “flat” in terms of pulses: there is one dimension of N(train) x N(pulse) values in HDF5, and the same number of train and pulse identifiers for reference). The unique train identifier values are taken as

coordinate values (“labels”).

[4]:

sa1_flux = sa1_data['SA1_XTD2_XGM/XGM/DOOCS:output', 'data.intensityTD'].xarray()

print(sa1_flux)

<xarray.DataArray 'SA1_XTD2_XGM/XGM/DOOCS:output.data.intensityTD' (trainId: 6295, dim_0: 1000)>

array([[2.0451287e+03, 7.8204414e+01, 1.9644452e+03, ..., 1.0000000e+00,

1.0000000e+00, 1.0000000e+00],

[2.0914644e+03, 4.2423668e+01, 1.9155820e+03, ..., 1.0000000e+00,

1.0000000e+00, 1.0000000e+00],

[1.8729647e+03, 4.3682529e+01, 1.9840254e+03, ..., 1.0000000e+00,

1.0000000e+00, 1.0000000e+00],

...,

[1.6113424e+03, 5.5693771e+01, 1.8114177e+03, ..., 1.0000000e+00,

1.0000000e+00, 1.0000000e+00],

[1.5365902e+03, 6.4186798e+01, 1.6430872e+03, ..., 1.0000000e+00,

1.0000000e+00, 1.0000000e+00],

[1.8715566e+03, 5.9838600e+01, 1.7388638e+03, ..., 1.0000000e+00,

1.0000000e+00, 1.0000000e+00]], dtype=float32)

Coordinates:

* trainId (trainId) uint64 38227866 38227867 38227868 ... 38234160 38234161

Dimensions without coordinates: dim_0

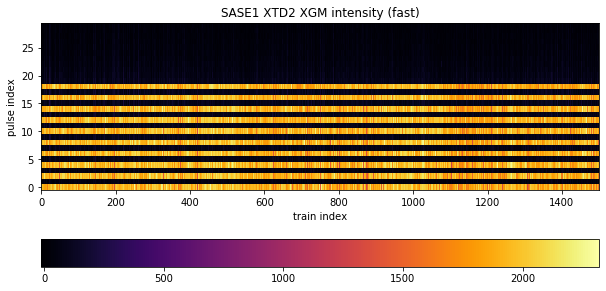

Next, we will plot a portion of the data in two dimensions, taking the first 1500 trains for the x-Axis and the first 30 pulses per train for the y-Axis (1500, 30). Because the Matplotlib convention takes the slow axis to be y, we have to transpose to (30, 1500):

[5]:

fig, ax = plt.subplots(figsize=(10, 6))

image = ax.imshow(sa1_flux[:1500, :30].transpose(), origin='lower', cmap='inferno')

ax.set_title('SASE1 XTD2 XGM intensity (fast)')

fig.colorbar(image, orientation='horizontal')

ax.set_xlabel('train index')

ax.set_ylabel('pulse index')

ax.set_aspect(15)

The pattern tells us what was done in this experiment: the lasing scheme was set to provide an alternating X-ray pulse delivery within a train, where every “even” electron bunch caused lasing in SASE1 and every “odd” bunch caused lasing in SASE3. This scheme was applied for the first 20 pulses. Therefore, we see signal only for data at even pulses here (0,2,…18), throughout all trains, of which 1500 are depicted. The intensity varies somewhat around 2000 units, but for odd pulses it is suppressed and neglegibly small.

mean method can work with DataArray objects and average over a specified dimension.[:, :20:2] to take every element of the slow axis (trains) and every second pulse up to but excluding # 20. That is, start:end:step = 0:20:2 (start index 0 is default, thus not put, and stop means first index beyond range). We specify axis=1 to explicitly average over that dimension. The result is a DataArray reduced to the “trainId” dimension.[6]:

sa1_mean_on = np.mean(sa1_flux[:, :20:2], axis=1)

sa1_stddev_on = np.std(sa1_flux[:, :20:2], axis=1)

print(sa1_mean_on)

<xarray.DataArray 'SA1_XTD2_XGM/XGM/DOOCS:output.data.intensityTD' (trainId: 6295)>

array([1931.4768, 1977.8414, 1873.7828, ..., 1771.5828, 1697.2053,

1857.7439], dtype=float32)

Coordinates:

* trainId (trainId) uint64 38227866 38227867 38227868 ... 38234160 38234161

Accordingly for the odd “off” pulses:

[7]:

sa1_mean_off = np.mean(sa1_flux[:, 1:21:2], axis=1)

sa1_stddev_off = np.std(sa1_flux[:, 1:21:2], axis=1)

print(sa1_mean_off)

<xarray.DataArray 'SA1_XTD2_XGM/XGM/DOOCS:output.data.intensityTD' (trainId: 6295)>

array([96.10835 , 84.489044, 59.212048, ..., 90.2944 , 84.33766 ,

85.03202 ], dtype=float32)

Coordinates:

* trainId (trainId) uint64 38227866 38227867 38227868 ... 38234160 38234161

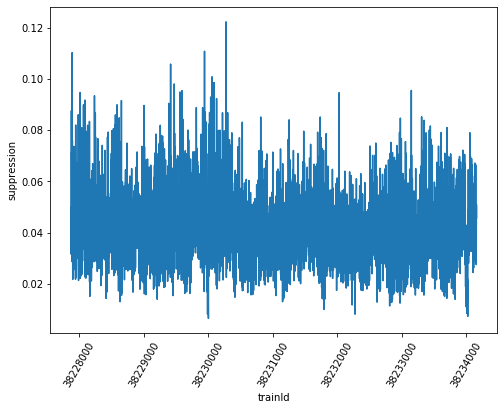

Now we can calculate the ratio of averages for every train - data types like numpy ndarray or xarray DataArray may be just divided “as such”, a shortcut notation for dividing every corresponding element - and plot.

[8]:

fig, ax = plt.subplots(figsize=(8, 6))

sa1_suppression = sa1_mean_off / sa1_mean_on

sa1_suppression.plot(ax=ax)

ax.ticklabel_format(style='plain', useOffset=False)

ax.ticklabel_format()

plt.xticks(rotation=60)

ax.set_ylabel('suppression')

[8]:

Text(0, 0.5, 'suppression')

Moreover, the relative error of this ratio can be calculated by multiplicative error propagation as the square root of the sum of squared relative errors (enumerator and denominator), and from it the absolute error. The Numpy functions “sqrt” and “square” applied to array-like structures perform these operations element-wise, so the entire calculation can be conveniently done using the arrays as arguments, and we obtain individual errors for every train in the end.

[9]:

sa1_rel_error = np.sqrt(np.square(sa1_stddev_off / sa1_mean_off) + np.square(sa1_stddev_on / sa1_mean_on))

sa1_abs_error = sa1_rel_error * sa1_suppression

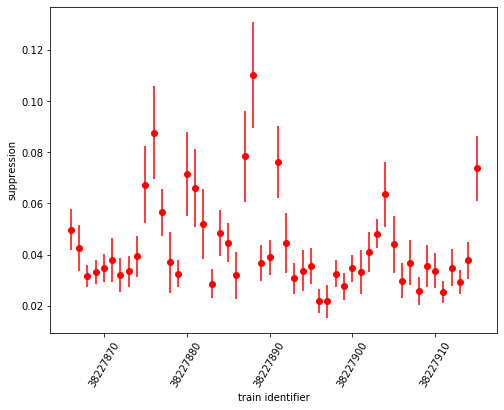

We can as well plot the suppression ratio values with individual error bars according to the respective absolute error. Here, we restrict ourselves to the first 50 trains for clarity:

[10]:

fig, ax = plt.subplots(figsize=(8, 6))

ax.errorbar(sa1_suppression.coords['trainId'].values[:50], sa1_suppression[:50], yerr=sa1_abs_error[:50], fmt='ro')

ax.set_xlabel('train identifier')

ax.ticklabel_format(style='plain', useOffset=False)

plt.xticks(rotation=60)

ax.set_ylabel('suppression')

[10]:

Text(0, 0.5, 'suppression')

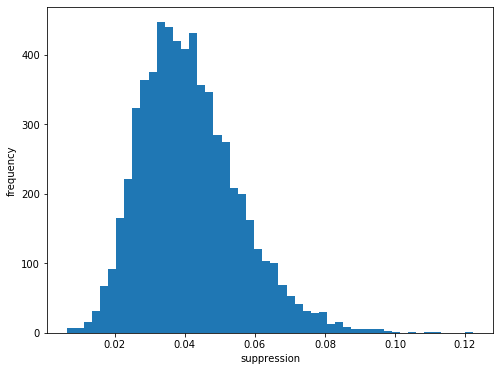

Finally, we draw a histogram of suppression ratio values:

[11]:

fig, ax = plt.subplots(figsize=(8, 6))

ax.hist(sa1_suppression, bins=50)

ax.set_xlabel('suppression')

ax.set_ylabel('frequency')

[11]:

Text(0, 0.5, 'frequency')

We see that there is a suppression of signal from odd pulses to approximately 4% of the intensity of even pulses.

SASE3

We repeat everything for the second data set from the different run - SASE3:

[12]:

sa3_data = open_run(proposal=700000, run=9)

sa3_data.info()

# of trains: 6236

Duration: 0:10:23.6

First train ID: 38227850

Last train ID: 38234085

0 detector modules ()

1 instrument sources (excluding detectors):

- SA3_XTD10_XGM/XGM/DOOCS:output

0 control sources:

[13]:

sa3_flux = sa3_data['SA3_XTD10_XGM/XGM/DOOCS:output', 'data.intensityTD'].xarray()

print(sa3_flux.shape)

(6235, 1000)

[14]:

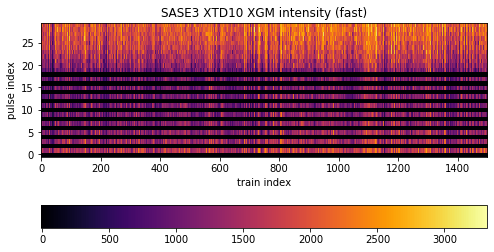

fig, ax = plt.subplots(figsize=(8, 6))

image = ax.imshow(sa3_flux[:1500, :30].transpose(), origin='lower', cmap='inferno')

ax.set_title('SASE3 XTD10 XGM intensity (fast)')

fig.colorbar(image, orientation='horizontal')

ax.set_xlabel('train index')

ax.set_ylabel('pulse index')

ax.set_aspect(15)

The difference here is that the selection scheme (indexing and slicing) shifts by one with respect to SASE1 data: odd pulses are “on”, even pulses are “off”. Moreover, while the alternating scheme is upheld to pulse # 19, pulses beyond that exclusively went to SASE3. There is signal up to pulse # 70, which we could see with a wider plotting range (but not done due to emphasis on the alternation).

[15]:

sa3_mean_on = np.mean(sa3_flux[:, 1:21:2], axis=1)

sa3_stddev_on = np.std(sa3_flux[:, 1:21:2], axis=1)

print(sa3_mean_on)

<xarray.DataArray 'SA3_XTD10_XGM/XGM/DOOCS:output.data.intensityTD' (trainId: 6235)>

array([ 963.89746, 1073.1758 , 902.22656, ..., 883.9881 , 960.5875 ,

889.625 ], dtype=float32)

Coordinates:

* trainId (trainId) uint64 38227850 38227851 38227852 ... 38234084 38234085

[16]:

sa3_mean_off = np.mean(sa3_flux[:, :20:2], axis=1)

sa3_stddev_off = np.std(sa3_flux[:, :20:2], axis=1)

print(sa3_mean_off)

<xarray.DataArray 'SA3_XTD10_XGM/XGM/DOOCS:output.data.intensityTD' (trainId: 6235)>

array([5.435107 , 6.6155367, 8.361802 , ..., 2.3786662, 7.1359987,

4.612433 ], dtype=float32)

Coordinates:

* trainId (trainId) uint64 38227850 38227851 38227852 ... 38234084 38234085

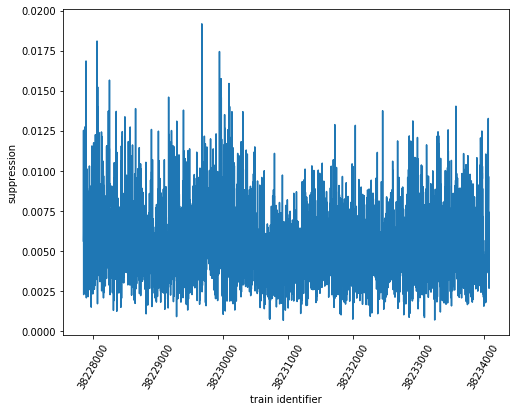

The suppression ratio calculation and its plot:

[17]:

fig, ax = plt.subplots(figsize=(8, 6))

sa3_suppression = sa3_mean_off / sa3_mean_on

ax.plot(sa3_suppression.coords['trainId'].values, sa3_suppression)

ax.set_xlabel('train identifier')

ax.ticklabel_format(style='plain', useOffset=False)

plt.xticks(rotation=60)

ax.set_ylabel('suppression')

[17]:

Text(0, 0.5, 'suppression')

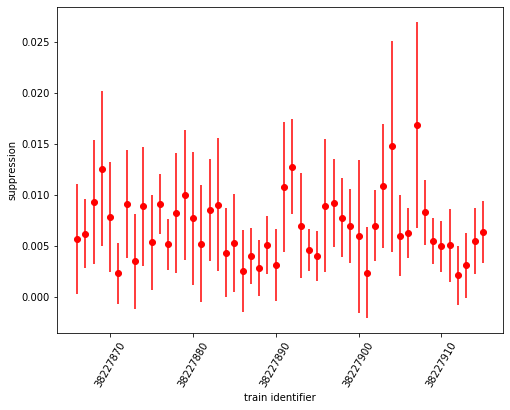

The error calculation with (selective) plot

[18]:

fig, ax = plt.subplots(figsize=(8, 6))

sa3_rel_error = np.sqrt(np.square(sa3_stddev_off / sa3_mean_off) + np.square(sa3_stddev_on / sa3_mean_on))

sa3_abs_error = sa3_rel_error * sa3_suppression

ax.errorbar(sa1_suppression.coords['trainId'].values[:50], sa3_suppression[:50], yerr=sa3_abs_error[:50], fmt='ro')

ax.set_xlabel('train identifier')

ax.ticklabel_format(style='plain', useOffset=False)

plt.xticks(rotation=60)

ax.set_ylabel('suppression')

[18]:

Text(0, 0.5, 'suppression')

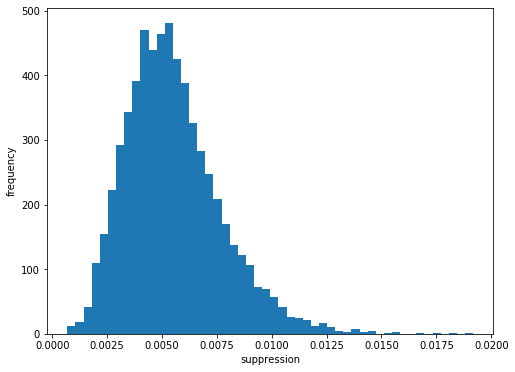

The histogram:

[19]:

fig, ax = plt.subplots(figsize=(8, 6))

ax.hist(sa3_suppression, bins=50)

ax.set_xlabel('suppression')

ax.set_ylabel('frequency')

[19]:

Text(0, 0.5, 'frequency')

Here, suppression of signal for even “off” pulses is to approximately 0.5% of intensity from odd “on” pulses. The “suppression factor” is almost 10 times the value of SASE1. However, the relative error of these values is larger as well, as can be seen in the error-bar plot. For the smaller quantities, it is ~ 100% (!).

Overall comparison of suppression ratio (with error)

[20]:

sa1_on_all = np.array(sa1_flux[:, :20:2]).flatten()

sa1_on_all.shape

[20]:

(62950,)

[21]:

sa1_mean_on_overall = np.mean(sa1_on_all)

sa1_stddev_on_overall = np.std(sa1_on_all)

[22]:

sa1_off_all = np.array(sa1_flux[:, 1:21:2]).flatten()

sa1_off_all.shape

[22]:

(62950,)

[23]:

sa1_mean_off_overall = np.mean(sa1_off_all)

sa1_stddev_off_overall = np.std(sa1_off_all)

[24]:

sa1_suppression_overall = sa1_mean_off_overall / sa1_mean_on_overall

sa1_rel_error_overall = np.sqrt(np.square(sa1_stddev_off_overall / sa1_mean_off_overall) + \

np.square(sa1_stddev_on_overall / sa1_mean_on_overall))

sa1_abs_error_overall = sa1_rel_error_overall * sa1_suppression_overall

print('SA1 suppression ratio =', sa1_suppression_overall, '\u00b1', sa1_abs_error_overall)

SA1 suppression ratio = 0.04107769 ± 0.016009845

[25]:

sa3_on_all = np.array(sa3_flux[:, 1:21:2]).flatten()

sa3_on_all.shape

[25]:

(62350,)

[26]:

sa3_mean_on_overall = np.mean(sa3_on_all)

sa3_stddev_on_overall = np.std(sa3_on_all)

[27]:

sa3_off_all = np.array(sa3_flux[:, :20:2]).flatten()

sa3_off_all.shape

[27]:

(62350,)

[28]:

sa3_mean_off_overall = np.mean(sa3_off_all)

sa3_stddev_off_overall = np.std(sa3_off_all)

[29]:

sa3_suppression_overall = sa3_mean_off_overall / sa3_mean_on_overall

sa3_rel_error_overall = np.sqrt(np.square(sa3_stddev_off_overall / sa3_mean_off_overall) + \

np.square(sa3_stddev_on_overall / sa3_mean_on_overall))

sa3_abs_error_overall = sa3_rel_error_overall * sa3_suppression_overall

print('SA3 suppression ratio =', sa3_suppression_overall, '\u00b1', sa3_abs_error_overall)

SA3 suppression ratio = 0.005213415 ± 0.0040653846

References

Tiedtke et al., Gas-detector for X-ray lasers , J. Appl. Phys. 103, 094511 (2008) - DOI 10.1063/1.2913328

Sorokin et al., J. Synchrotron Rad. 26 (4), DOI 10.1107/S1600577519005174 (2019)

Th. Maltezopoulos et al., J. Synchrotron Rad. 26 (4), DOI 10.1107/S1600577519003795 (2019)